What Investors Miss When They Back "AI-First" Startups

- Jan 2

Updated: Mar 30

For the past several years, “AI-first” has been one of the most powerful labels a startup can carry. It signals ambition, technical sophistication, and proximity to the center of capital gravity. To investors, it implies leverage: models improve over time, data compounds, unit economics get better, and scale feels almost automatic.

But there is a growing gap between how many AI startups are evaluated—and how they actually fail.

The uncomfortable reality is that a large share of AI startups don’t collapse because their models are weak, their datasets are insufficient, or their algorithms are inferior. They fail because once AI leaves the pitch deck and enters the physical world, a completely different set of constraints takes over. Those constraints—manufacturing, reliability, deployment—are rarely front and center during diligence. Often, they’re barely discussed at all.

These aren’t “later-stage problems.” They are foundational forces that determine whether a company can turn intelligence into something that survives contact with reality. And they are systematically underestimated when investors back “AI-first” narratives.

The Model Is Not the Product

Investor conversations around AI startups almost always start with the model. Accuracy metrics, benchmarks, latency figures, training pipelines, and architectural novelty dominate early meetings. This focus makes sense—models are tangible, comparable, and intellectually legible.

But in many AI businesses, especially those touching the physical world, the model is only one component of a much larger system. Treating it as the product creates a dangerous distortion.

As Andrew Ng famously put it, “AI is the new electricity.”

Electricity, however, only creates value once it is wired into real systems. Intelligence behaves the same way.

Once AI moves into devices, robots, vehicles, medical equipment, or industrial systems, performance metrics can no longer be evaluated in isolation. Power budgets constrain inference. Thermal behavior throttles throughput. Sensor noise reshapes input distributions. Compute placement determines latency and safety margins. Failure modes multiply, and many of them are invisible in lab conditions.

A model that looks exceptional in controlled testing can become irrelevant—or actively harmful—when exposed to heat, vibration, electromagnetic interference, intermittent connectivity, or imperfect calibration. Intelligence that cannot be sustained continuously, predictably, and safely does not create durable value.

Yet many diligence processes implicitly assume that if the model works, the rest is “engineering.” In physical AI systems, that assumption is often fatal.

Manufacturing Is Treated as an Execution Detail—Until It Isn’t

Manufacturing is frequently treated as a downstream execution task, something that happens after product-market fit, after pilots, after scale is inevitable. The default investor assumption is that manufacturing problems are solvable with enough time, capital, and outsourcing.

That assumption directly contradicts the lived experience of teams that have taken hardware into production.

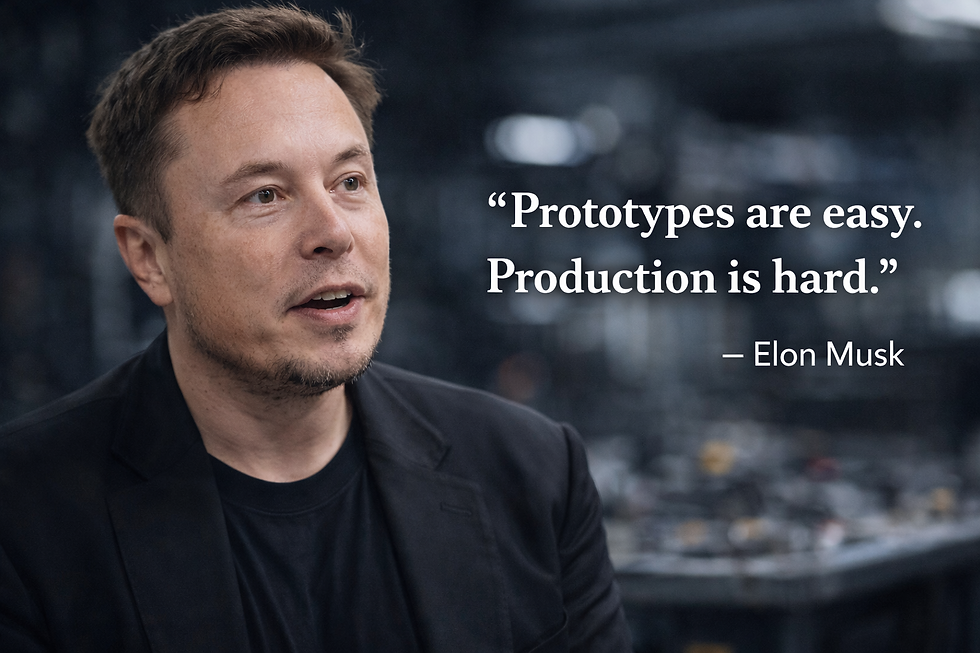

As Elon Musk once put it bluntly, “Prototypes are easy. Production is hard.”

In reality, manufacturing decisions shape outcomes far earlier—and far more rigidly—than most investors realize. Component selection determines long-term availability, cost stability, and yield. Packaging choices affect thermal performance, assembly complexity, and test coverage. Mechanical designs lock in tolerances that either enable or prevent repeatable assembly.

When startups transition from prototype to production, these decisions collide with reality. Lead times stretch from weeks to months. Minimum order quantities force early cash commitments. Yield issues emerge. Costs inflate beyond what the business model can absorb.

From the outside, this looks like execution friction. From the inside, it’s the consequence of manufacturing assumptions that were never surfaced—or diligenced—early enough.
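The yield and minimum-order effects described above can be made concrete with a little arithmetic. The following is a minimal sketch, not a costing model: the function names and every figure in it are hypothetical, and it assumes failed units are scrapped rather than reworked.

```python
def cost_per_good_unit(build_cost: float, yield_rate: float) -> float:
    """Effective cost of one sellable unit when only a fraction of builds pass test.

    Assumes failed units are scrapped (no rework), which is a simplification.
    """
    if not 0.0 < yield_rate <= 1.0:
        raise ValueError("yield_rate must be in (0, 1]")
    return build_cost / yield_rate


def moq_cash_commitment(unit_price: float, minimum_order_quantity: int) -> float:
    """Cash tied up by a single minimum-order purchase of one component."""
    return unit_price * minimum_order_quantity


# All figures are hypothetical, chosen only to show the shape of the math.
BUILD_COST = 200.0  # parts plus assembly for one unit
planned = cost_per_good_unit(BUILD_COST, yield_rate=0.98)  # yield assumed in the plan
actual = cost_per_good_unit(BUILD_COST, yield_rate=0.80)   # yield seen in early production
cash = moq_cash_commitment(unit_price=12.0, minimum_order_quantity=5000)

print(f"planned cost per good unit: {planned:.2f}")
print(f"actual cost per good unit:  {actual:.2f}")
print(f"cash committed to one component order: {cash:.0f}")
```

Under these assumed numbers, the drop in yield alone raises the cost of every sellable unit by more than a fifth, and a single component order commits cash well before any revenue arrives.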

Reliability Rarely Shows Up in a Pitch—But It Determines Survival

Reliability is one of the least visible risks in AI investing. It doesn’t demo well. It doesn’t fit neatly into a slide. It doesn’t generate excitement.

And yet, it quietly determines whether customers stay, expand, or walk away.

Systems that run perfectly for minutes during a demo may degrade under continuous operation. Heat accumulates. Components age. Sensors drift. Edge cases stop being rare and start becoming routine.

Andy Grove, former CEO of Intel, captured this reality succinctly:

“You can have all the right ideas, but without flawless execution, they don’t matter.”

In real-world deployments, reliability failures don’t announce themselves dramatically. They appear as intermittent downtime, unexplained faults, increased service calls, and erosion of trust. Fixes often require architectural changes that ripple across hardware, software, and supply chain.

Investors frequently miss reliability risk because it’s hard to quantify early and easy to assume away. But in physical AI systems, reliability debt compounds faster than technical debt—and it is far harder to unwind once products are deployed.
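The gap between a demo and continuous operation can be illustrated with a standard constant-failure-rate model. This is a rough sketch under stated assumptions: the mean time between failures (MTBF) and fleet size are hypothetical, and the exponential model ignores the wear-out effects (heat, aging, drift) described above, which would make long-run figures worse.

```python
import math


def survival_probability(hours: float, mtbf_hours: float) -> float:
    """Probability of zero failures over `hours`, assuming a constant failure rate."""
    return math.exp(-hours / mtbf_hours)


def expected_units_with_failure(units: int, hours: float, mtbf_hours: float) -> float:
    """Expected number of fleet units that fail at least once in the window."""
    return units * (1.0 - survival_probability(hours, mtbf_hours))


# Hypothetical figures for illustration only.
MTBF_HOURS = 2000.0
DEMO_HOURS = 0.5            # a thirty-minute demo
FIELD_HOURS = 24 * 180      # roughly six months of continuous operation

demo = survival_probability(DEMO_HOURS, MTBF_HOURS)
field = survival_probability(FIELD_HOURS, MTBF_HOURS)
fleet = expected_units_with_failure(100, FIELD_HOURS, MTBF_HOURS)

print(f"chance of a clean demo:          {demo:.4f}")
print(f"chance of six clean months:      {field:.4f}")
print(f"units of 100 expected to fail:   {fleet:.1f}")
```

The same system that almost never fails in a demo fails at least once in most deployed units over six months under these assumptions, which is why a successful demo says little about field reliability.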

Deployment Is Where Economics Get Real

Even when an AI system technically works, deployment is where many business models quietly break.

Cloud inference costs scale with usage. Edge inference demands specialized hardware. Connectivity assumptions fail in the field. Installation, calibration, monitoring, and servicing consume time and money that were never modeled.

Startups often present clean unit economics based on idealized deployments. Reality introduces friction: site-specific configurations, human intervention, ongoing maintenance. Margins erode not because the AI lacks value, but because the cost of making it reliable was underestimated.

For many investors, this is the moment when a promising AI-first company stops looking scalable—not because the idea is wrong, but because deployment was never truly understood.
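The margin erosion described in this section can be sketched as a comparison between an idealized deployment and a field deployment. This is a minimal illustration rather than a financial model: the cost categories follow the ones named above, while the class, the function, and all dollar figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DeploymentCosts:
    """Monthly cost per deployed unit (all values hypothetical)."""
    cloud_inference: float
    connectivity: float
    maintenance: float        # service calls, recalibration, monitoring
    install_amortized: float  # installation and site configuration spread over the contract

    def total(self) -> float:
        return (self.cloud_inference + self.connectivity
                + self.maintenance + self.install_amortized)


def gross_margin(monthly_revenue: float, costs: DeploymentCosts) -> float:
    """Gross margin as a fraction of revenue for one deployed unit."""
    return (monthly_revenue - costs.total()) / monthly_revenue


REVENUE = 100.0  # hypothetical monthly revenue per unit

# The pitch-deck case: only inference and connectivity are modeled.
idealized = DeploymentCosts(cloud_inference=20.0, connectivity=5.0,
                            maintenance=0.0, install_amortized=0.0)
# The field case: the same unit, with the unmodeled costs included.
field = DeploymentCosts(cloud_inference=20.0, connectivity=5.0,
                        maintenance=25.0, install_amortized=15.0)

print(f"idealized margin: {gross_margin(REVENUE, idealized):.0%}")
print(f"field margin:     {gross_margin(REVENUE, field):.0%}")
```

Nothing about the model changes between the two cases. The difference comes entirely from costs that the idealized plan left out.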

Why These Blind Spots Persist

These diligence gaps are not the result of carelessness. They are structural.

AI investing has been shaped by software success, where abstraction hides complexity and iteration masks fragility. The mental models that work for SaaS—deploy fast, fix later—collapse when intelligence is embodied in physical systems.

There is also a signaling problem. Startups emphasize novelty and performance because those traits attract capital. Investors reward vision and speed, not caution and constraint analysis. Manufacturing, reliability, and deployment are assumed solvable with money.

The result is a shared deferral of reality—until reality asserts itself.

The Founders Who See This Early Gain an Advantage

Some founders recognize these constraints early, and it fundamentally changes how they build.

They design systems with manufacturing in mind. They assume models will fail at the edges. They think about thermals, serviceability, and deployment from the start. They hire for systems judgment, not just algorithmic brilliance.

These teams often look slower early on. Their demos are less flashy. Their progress is less legible.

But over time, they compound advantages. Redesign cycles shrink. Pilots convert. Customers trust the product. Investors stop worrying about survivability and start focusing on scale.

Seeing reality early is not a disadvantage. It is a moat.

What Better Diligence Actually Looks Like

Backing durable AI companies requires expanding the diligence lens.

It means asking not only how accurate the model is, but where it runs, how it fails, and what happens after months of continuous use. It means understanding manufacturing assumptions and deployment economics concretely, not abstractly.

It also means recognizing when a startup needs ecosystem leverage rather than just capital. No early team can master every constraint alone. Execution networks matter.

Why Execution Ecosystems Are Becoming Strategic Assets

As AI moves out of datacenters and into the physical world, execution ecosystems become decisive.

Regions with deep semiconductor expertise, hardware engineering talent, manufacturing infrastructure, and operational discipline compress learning cycles. They surface failure modes early and make tradeoffs explicit before they become existential.

Execution ecosystems are not just supply chains.

They are risk-management engines.

The Real Question Investors Should Be Asking

The next generation of AI winners will not be defined by who trains the biggest models or tops the latest benchmark.

They will be defined by who can deploy intelligence reliably, economically, and at scale—outside the lab, outside the cloud, and inside the real world.

The most important diligence question is no longer, “Is this startup AI-first?”

It is: “Is this startup built to survive reality?”

Because intelligence alone does not create value.

Execution does.

Where Taiwan Innovation Meets Silicon Valley Capital

If this topic interests you, join us in person in Silicon Valley. Taiwan Innovation Spotlight is a high-signal gathering featuring a curated showcase of breakthrough companies and the people building what’s next, bringing together Taiwan’s top innovators with Silicon Valley’s leading investors and ecosystem builders.

Join us on May 8, 2026, at 6 PM in Mountain View, California, and experience firsthand why Sparknify is known as one of Silicon Valley’s best event hosts. This is not one to miss.